Innovation in the Age of Regulation: Building AI with Federated Learning

The rapid advances in the AI industry over the last year have prompted fervent discussion about the growing need to regulate the technology. The European Union has enacted several notable technology regulations over the past decade, the most recent being the EU Data Act of 2022, which sought to improve data privacy for sensitive information. A new act, currently titled the EU Artificial Intelligence Act, will ban products that are considered high risk and unethical for public consumption.

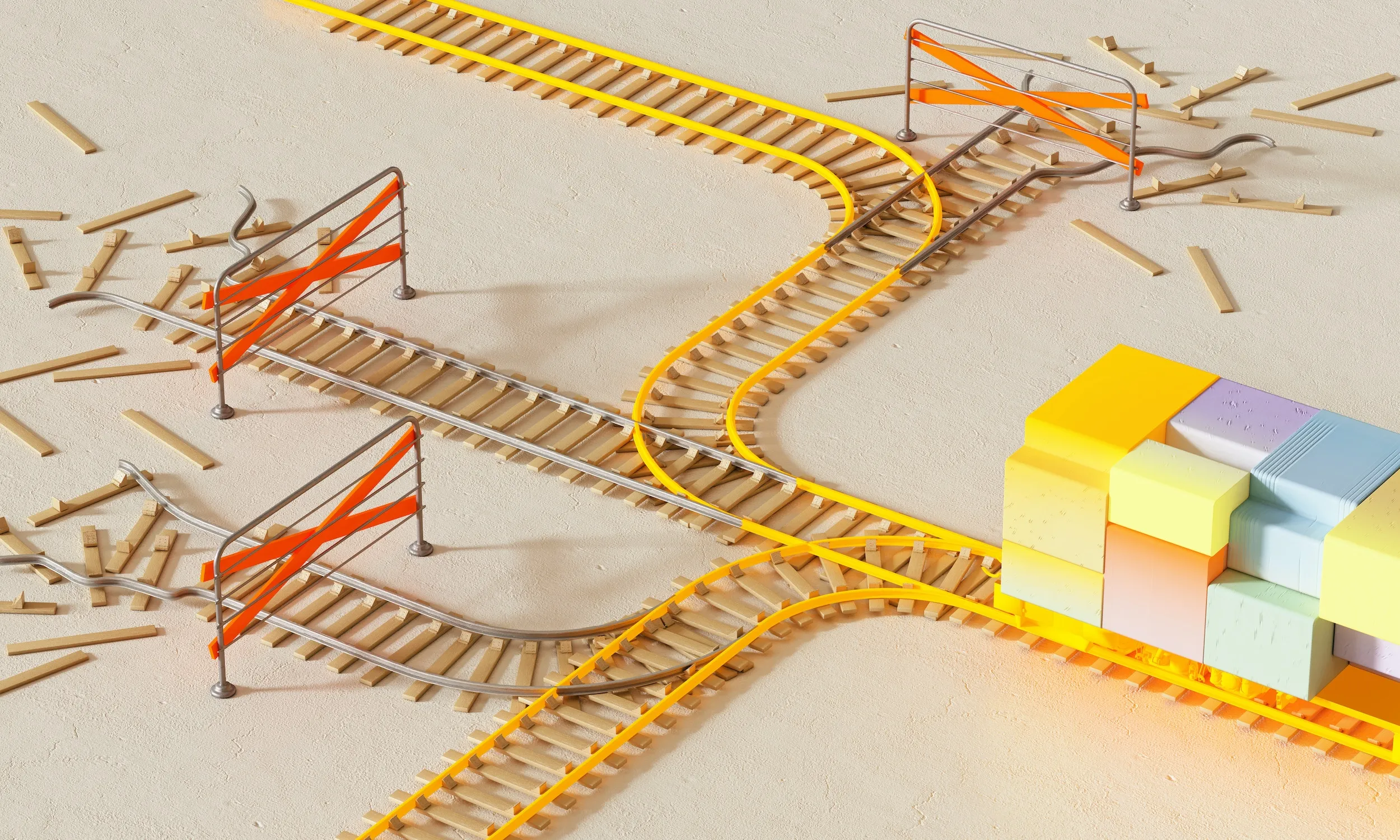

With the rise of data privacy and AI regulation, how can companies embrace the innovative aspects of AI while protecting consumer data? How can we make advancements with AI in healthcare, finance, and telecommunications without actually touching the sensitive data required to train machine learning models?

In classical machine learning, to train models using data from multiple clients (for example, patient data stored in different locations, or health data saved across different devices), the data must travel from each device to a central compute engine.

This means that the data no longer resides solely with its original owner. In some industries, such as healthcare, legal guidelines prevent such a transfer, making it impossible to train a model using classical machine learning.

With federated learning, however, the model travels to the data. Rather than the data being sent to a central server or compute engine, the server sends the current model weights to each device, and each device trains the model locally and returns its updated weights. The data stays with the original owner, and the model is trained using the compute of the device that holds the data.

This means that we can train models without ever touching personal data. The data is never transferred off the device during training, and the model weights exchanged with the server do not contain the individual data points that were used to determine the final parameters.
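To make the round trip concrete, here is a minimal sketch of one common approach, federated averaging, on a toy linear model. The article does not name a specific algorithm, so the choice of federated averaging is an assumption. The function names (`local_train`, `federated_average`, `run_federated_training`, `make_client`) and the synthetic data are also hypothetical. Everything runs in a single process, so the "devices" are just Python lists; a real deployment would add networking, client selection, and further safeguards.

```python
import random


def local_train(weights, data, lr=0.1, epochs=5):
    """Run a few gradient-descent steps on one client's private data.

    Toy model: y ~ w * x + b. Only the updated (w, b) is returned;
    the raw (x, y) pairs never leave this function.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(client_weights, client_sizes):
    """Server step: average client weights, weighted by dataset size."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return (w, b)


def run_federated_training(clients, rounds=100):
    """Repeat: send weights out, train locally, average what comes back."""
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_train(global_weights, data) for data in clients]
        sizes = [len(data) for data in clients]
        global_weights = federated_average(updates, sizes)
    return global_weights


def make_client(n, seed):
    """Synthetic private dataset drawn from y = 3x + 1 plus small noise."""
    rng = random.Random(seed)
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, 3.0 * x + 1.0 + rng.gauss(0, 0.01)) for x in xs]


# Three simulated devices, each holding data the server never sees.
clients = [make_client(20, seed=1), make_client(35, seed=2), make_client(50, seed=3)]
final_w, final_b = run_federated_training(clients)
print(f"learned w={final_w:.2f}, b={final_b:.2f}")
```

In this sketch the server only ever handles `(w, b)` pairs and dataset sizes. Weighting the average by dataset size is one common design choice, so that clients with more data have proportionally more influence on the shared model.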

The power of federated learning is two-fold: it not only allows us to innovate in the current landscape of regulation, but it also allows us to prioritize the ethical implications of using our personal data to train these powerful models.

We here at KUNGFU.AI have experience building AI products that leverage federated learning to meet industry guidelines and ethical compliance requirements. To learn more about how we can help you get started, review our whitepaper on how we helped our client, Clairity, build a cancer prediction model using federated learning.
