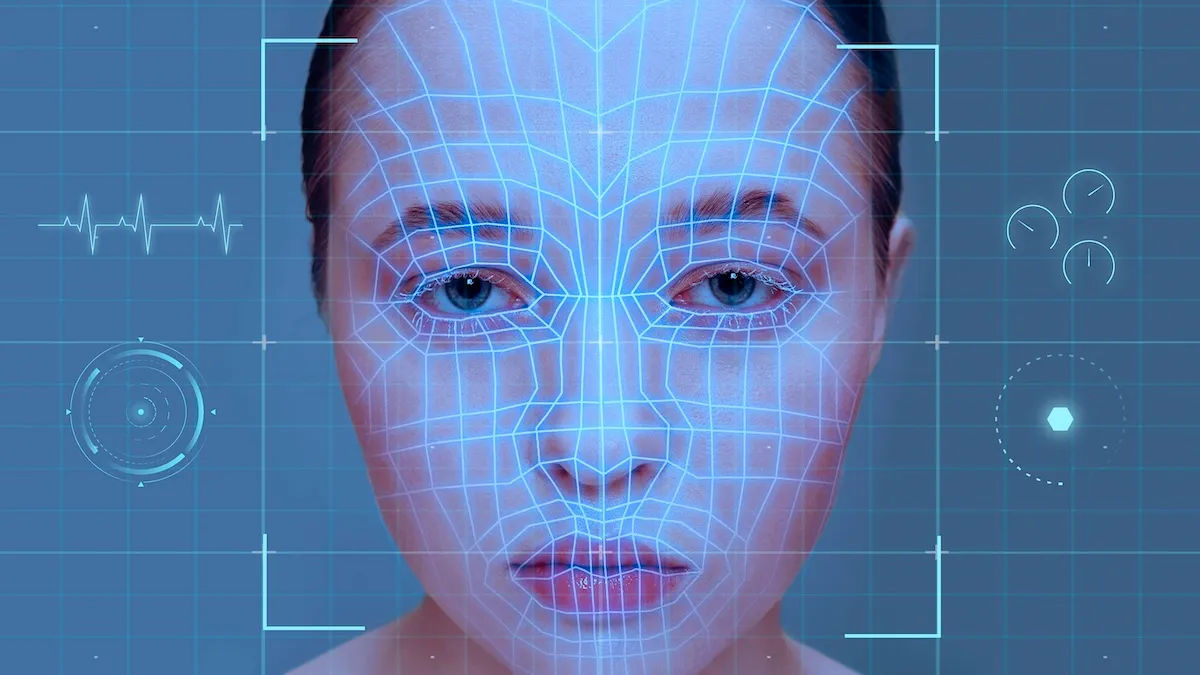

KUNGFU.AI Updates Ethical Pledge on Facial Recognition

Here at KUNGFU.AI, we have a passion for finding and building fantastic AI strategies and solutions for our clients, and also for ensuring (as best we can) that our work on AI is a positive force in the world. We subject our potential projects to robust, open, and transparent conversations about ethics with our entire team, and we take pride in the fact that we turned away our first client for ethical reasons two days after our company launch. We work hard to put our money where our mouth is.

One area of particular ethical concern in the field of Artificial Intelligence is facial recognition. In 2020, KUNGFU.AI made its initial Ethical Pledge on Facial Recognition, based on the Safe Face Pledge. However, that pledge has since been sunsetted, and continuing to rely on it would be against its creators’ wishes. With so many changes in the field since then, we also believe that this subject is worth revisiting, that our approach to it is worth refactoring, and that this conversation is worth having publicly.

Our New Pledge

We will not work on biometric recognition without the following safeguards:

- It will never be used on the general public, only on a consenting group in a limited space.

- All data processing follows the principle of least privilege, and any party with access to biometric personally identifiable information (PII) must have a defensible reason for that access.

- No other tool could reasonably be substituted.

- No negative consequence befalls a person without human validation.

- Our data scientists and machine learning engineers have free rein to ensure ethical data practices, including the sourcing of training data and the evaluation of the model for obvious bias before release.

- It serves a useful and pro-social purpose, not surveillance.

- We have a contractual guarantee that it will be used for no other purpose.

The Purpose of this Pledge

Why do we believe that facial recognition and other biometrics carry a particular risk, compared to the general risks associated with AI? A comprehensive answer is beyond the scope of this document, but in brief:

- Face-related data is often collected in public or online, without direct consent.

- Facial recognition systems are frequently deployed without the knowledge or consent of individuals being monitored by the system.

- Face-related data cannot be meaningfully anonymized, increasing the impact of data breaches.

- Facial recognition systems are known to suffer from issues of bias, showing lower performance for underrepresented groups, and are knowingly deployed anyway.

- Facial recognition systems are typically developed via transfer learning, meaning that even if the new dataset is clean, they still inherit biases from their original training.

- Facial recognition is very present in science fiction, where it is also very inaccurately portrayed. This warps the perceptions of its capabilities and risks by both operators and subjects of the system, which mandates extra care in its design and development.

- Any facial recognition system built today will become useless or illegal if it does not comply with future regulations, which are still very much in flux.

For these reasons, we perceive a level of risk in facial recognition systems in particular that requires direct focus.

Why Change the Previous Pledge?

In 2020, we wrote:

“Furthermore, KUNGFU.AI hereby commits to not engage in any AI-based biometric (this means faces, but it also means voices, fingerprints, etc.) recognition of individuals” with “one explicit caveat”: “developing a model that answers the lowest level question of facial recognition -- identifying whether a collection of pixels contains a face at all.”

We also committed to the planks of the Safe Face Pledge, created by the Algorithmic Justice League (AJL):

“1) Show value for human dignity, life, and rights

- Do not contribute to facial recognition applications that risk human life

- Do not facilitate secret and discriminatory government surveillance

- Mitigate law enforcement abuse of facial recognition

- Ensure your rules are being followed.

2) Address harmful bias

- Implement internal bias evaluation processes and support independent evaluation

- Submit models on the market for benchmark when available

3) Facilitate transparency

- Increase awareness of facial analysis technology used

- Enable external analysis of facial recognition technology on the market

4) Embed Safe Face pledge into business practice

- Modify legal documents to reflect value for human life, dignity, and rights

- Engage with stakeholders

- Provide details of Safe Face Implementation”

Those planks still sound pretty good to us! But while we still agree with the pledge broadly, we respect the fact that it has been sunsetted, and the reasoning behind that decision. The AJL is now primarily calling for industry-wide regulations, as they have found self-regulation to be systematically insufficient. We at KUNGFU.AI echo their support for reasonable and well-designed regulations. At the same time, we do not consider ourselves relieved of the obligation to regulate ourselves.

Our prior pledge recused us from any facial recognition conversation before it even began. While we are committed to our ideals, we believe it may be more materially impactful to educate our clients on the risks associated with facial recognition and to offer robust mitigation strategies. If we say “no”, our customer may simply go elsewhere, and the harm may be done regardless of whether our hands remain clean. If we say “yes, if”, we open the possibility of reducing harm.

We also recognize, and do not deny, the potential pitfalls that any critical eye can spot by the end of that last paragraph. We commit to continuously strengthening, not loosening, our process of rigorous analysis of the risks of any potential project, particularly in this space, in order to avoid those pitfalls.

In addition, we recognize that, while facial recognition systems have clearly been shown to have an immediate and substantially disparate impact, many of the risks of facial recognition are fundamentally shared by many AI systems, with a difference in degree but not in kind. We believe that the “yes, if” mentality applies to any work we do. Clear lines in the sand are satisfying, but complacency on the “safe” side of the line can mask the need for continuous strengthening of our risk mitigation process.

It’s also important to recognize that there are some use cases where facial recognition is consented to and considered a desirable solution by users.

We brainstormed the following scenarios where we might consider biometric recognition potentially acceptable:

- Combating celebrity deepfakes by recognizing whether the human face being portrayed is the actual person it claims to be, where the celebrities protected have opted in.

- Automated tagging of athletes in game footage, or of congresspeople in C-SPAN records.

- Skin cancer assessment (with a physician in the loop).

While these projects are (for us, and for now) hypothetical, they demonstrate the potential for a project to exist that could (given the correct safeguards) convince us to say “well, this case is fine.”

At KUNGFU.AI, we have no interest in ever saying “well, this case is fine” in practice while leaving “we don’t do facial recognition” as a firm line in writing. That kind of say/do mismatch, where we allow our commitments to weaken in an unexamined way, would conflict with our values of being Open, Caring, and Trustworthy.

We believe strongly that this update to our facial recognition pledge is better aligned with our current thinking and today’s realities. We hope to encourage creators and consumers of facial recognition and other biometric technologies to take a more active stance on the safety of their systems. By discussing these matters in a public forum, and by being willing to modify our stance over time, we hope both to help change the norms in the technology industry and to offer ourselves as a resource for addressing these issues with technical and non-technical audiences alike. So please, watch this space, or contact us if you have any questions regarding this document, facial recognition, or our ethics practices in general.